Language Models That Learn from Their Mistakes in the Real World

Your LLM Could Learn From Its Mistakes — If You Let It

TL;DR: Most language models are frozen after training, so the experience they gain from real users goes unused. Microsoft researchers built a system in which models keep improving by learning from their own deployment interactions, with no reward scores or human labelers required.

What It Is

Right now, when you deploy an LLM, it's basically frozen in time. It makes mistakes, gets corrections, sees what works — then forgets everything when the conversation ends. Online Experiential Learning (OEL) changes this by creating a learning loop that runs during deployment.

Here's how it works: As your model interacts with users or environments, it collects transcripts of what happened. Then, instead of just storing raw logs, the system extracts "experiential knowledge" — essentially, lessons learned from those interactions. Finally, it trains the model to internalize these lessons so it doesn't need to look them up every time. The improved model goes back into production, collects better experiences, extracts better lessons, and the cycle continues.
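To make the loop concrete, here is a minimal sketch of one collect, extract, consolidate round. Every name in it (`Transcript`, `oel_round`, the `toy_*` helpers, `base_model`) is hypothetical and chosen for illustration; none of it comes from the researchers' code. In particular, `toy_consolidate` only wraps the old model in a function, as a stand-in for the real step of training the lessons into the model's weights.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

Model = Callable[[str], str]  # assumption: a model is just prompt -> response


@dataclass
class Transcript:
    prompt: str
    response: str
    feedback: str  # whatever text the environment or user sent back


def collect(model: Model, prompts: List[str],
            environment: Callable[[str, str], str]) -> List[Transcript]:
    """Step 1: run the deployed model and record what happened."""
    transcripts = []
    for prompt in prompts:
        response = model(prompt)
        transcripts.append(Transcript(prompt, response, environment(prompt, response)))
    return transcripts


def oel_round(model: Model, prompts: List[str],
              environment: Callable[[str, str], str],
              extract: Callable[[List[Transcript]], List[str]],
              consolidate: Callable[[Model, List[str]], Model],
              lessons: List[str]) -> Tuple[Model, List[str]]:
    """One pass of the loop: collect, extract lessons, consolidate."""
    transcripts = collect(model, prompts, environment)
    # Step 2: accumulate only lessons we have not already recorded.
    new_lessons = [l for l in extract(transcripts) if l not in lessons]
    lessons = lessons + new_lessons
    # Step 3: produce the model that goes back into production.
    return consolidate(model, lessons), lessons


# --- Toy stand-ins so the sketch runs end to end (all hypothetical) ---

def base_model(prompt: str) -> str:
    return f"Answer to {prompt}"  # deliberately missing a final period


def toy_environment(prompt: str, response: str) -> str:
    if response.endswith("."):
        return "ok"
    return "error: response must end with a period"


def toy_extract(transcripts: List[Transcript]) -> List[str]:
    prefix = "error: "
    return sorted({t.feedback[len(prefix):] for t in transcripts
                   if t.feedback.startswith(prefix)})


def toy_consolidate(model: Model, lessons: List[str]) -> Model:
    # Stand-in for training: a real system would update model weights here.
    def improved(prompt: str) -> str:
        response = model(prompt)
        if "response must end with a period" in lessons and not response.endswith("."):
            response += "."
        return response
    return improved


if __name__ == "__main__":
    model, lessons = oel_round(base_model, ["a", "b"], toy_environment,
                               toy_extract, toy_consolidate, [])
    print(lessons)
    print(model("a"))
```

Note that `oel_round` never receives a numeric reward: the only signal is the feedback text stored in each transcript, which matches the text-only setup described here.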

The clever bit? None of this requires reward scores, human labelers, or access to the environment the model was deployed in. It needs only text transcripts of what the model tried and what happened.

Why It Matters

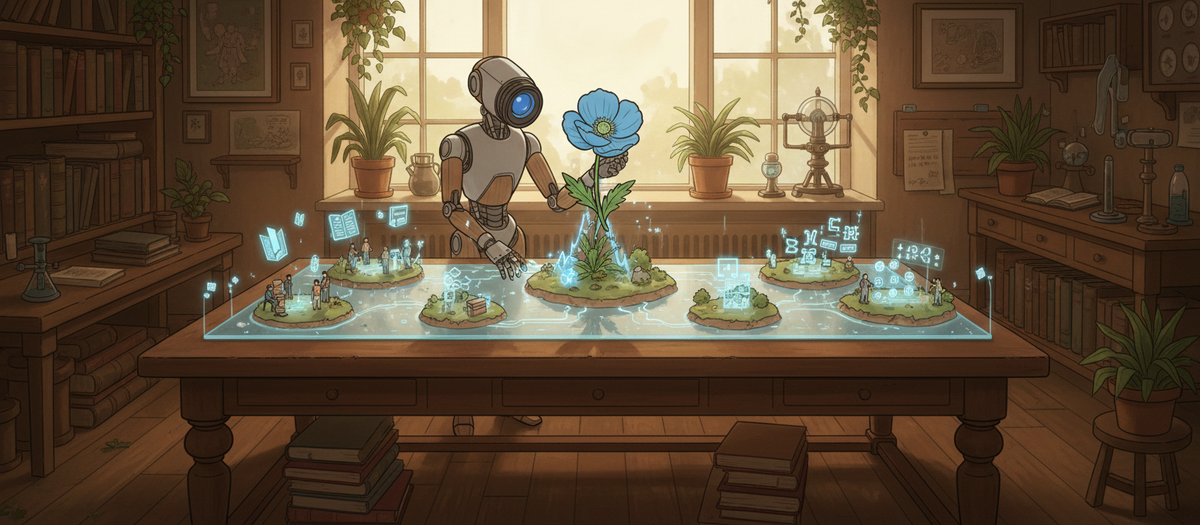

- Your deployment data becomes training data automatically. Instead of the standard "train once, deploy forever" approach, you can continuously improve models using the interactions they're already having. No need to periodically collect human annotations or build elaborate simulation environments.

- It gets more efficient over time, not just more accurate. In the researchers' tests, models didn't just improve task performance; they also generated shorter, more focused responses as they learned. Shorter responses mean fewer generated tokens, so inference cost drops as the model learns what works.

- It works without the usual RL infrastructure. You don't need reward models, human raters scoring outputs, or verifiable scoring functions. If your environment gives any kind of text feedback (error messages, success confirmations, user corrections), that's enough signal to learn from.

One Thing to Try

Start logging interaction trajectories for your deployed models right now, even if you're not ready to implement online learning. Capture the model's outputs alongside any signal of success or failure — whether that's explicit user feedback, downstream system responses, or task completion indicators. When you're ready to retrain, you'll have a goldmine of real-world experience to learn from instead of relying solely on synthetic data or expensive human annotations.
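As a starting point, here is a minimal sketch of such a logger, assuming an append-only JSON Lines file is good enough for your volume. The function names and record fields (`log_interaction`, `load_interactions`, `feedback`, `success`) are illustrative choices, not a required schema.

```python
import json
import time
from pathlib import Path
from typing import Any, Dict, List, Optional


def log_interaction(path: str, prompt: str, response: str,
                    feedback: Optional[str] = None,
                    success: Optional[bool] = None) -> Dict[str, Any]:
    """Append one interaction to a JSON Lines file and return the record."""
    record = {
        "timestamp": time.time(),
        "prompt": prompt,
        "response": response,
        "feedback": feedback,  # error message, user correction, etc., if any
        "success": success,    # None when no outcome signal is available
    }
    with Path(path).open("a", encoding="utf-8") as f:
        f.write(json.dumps(record, ensure_ascii=False) + "\n")
    return record


def load_interactions(path: str) -> List[Dict[str, Any]]:
    """Read every logged interaction back as a list of dicts."""
    with Path(path).open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Keeping `feedback` as free text and `success` as optional means you can log an interaction even when no outcome signal exists yet, then filter for the informative records when you are ready to retrain.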