Teaching Self-Driving Cars to Drive Like You Do

Your Self-Driving Car Should Drive Like You Do

TL;DR: Researchers built an AI driving system that learns your personal driving style (cautious vs. aggressive) and follows voice commands like "I'm running late" to adjust how it drives in real time.

What It Is

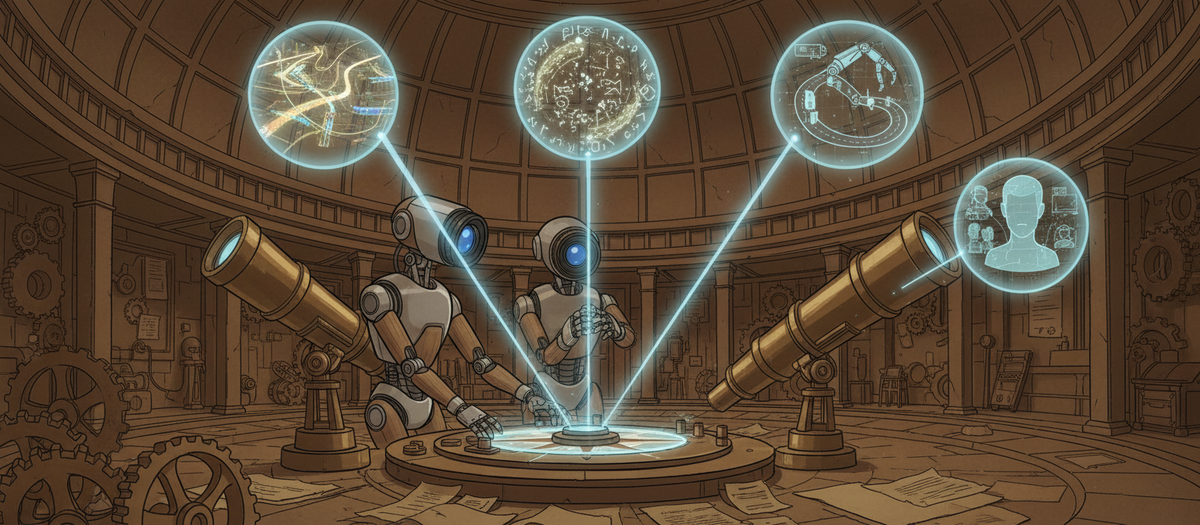

Most autonomous driving systems drive the same way for everyone, optimizing for generic "safe" or "efficient" behavior. But real people have different styles: some brake early, others accelerate aggressively, and some merge cautiously while others squeeze into tight gaps. Drive My Way (DMW) treats driving preferences as a personalization problem. It learns a unique "user embedding" for each driver (think: a numerical fingerprint of driving habits) by observing how 30 real drivers handled 20 traffic scenarios. The system then uses this embedding to make driving decisions that match your style, while also listening to natural language commands like "brake gently, I'm feeling carsick" to adjust its behavior on the fly.
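To make "conditioning on an embedding" concrete, here is a minimal Python sketch. It is not DMW's model: a hand-written linear rule stands in for the learned policy network, and the two embedding dimensions are given readable meanings (a hypothetical assertiveness score and a preferred time headway in seconds) purely for illustration. Real learned embeddings are usually not interpretable dimension by dimension. `State`, `policy`, the gains, and the example profiles are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class State:
    speed: float        # ego speed, m/s
    gap: float          # distance to the lead vehicle, m
    speed_limit: float  # m/s


def policy(state: State, user_emb: tuple[float, float]) -> float:
    """Hypothetical stand-in for an embedding-conditioned driving policy.

    Returns a target acceleration in m/s^2. For illustration only,
    user_emb[0] is an assertiveness score and user_emb[1] is the
    preferred time headway in seconds.
    """
    assertiveness, headway_s = user_emb
    # Accelerate toward the speed limit; more assertive drivers push harder.
    accel = 0.2 * (1.0 + assertiveness) * (state.speed_limit - state.speed)
    # Brake in proportion to how far the gap falls short of the preferred headway.
    desired_gap = headway_s * state.speed
    accel -= 0.2 * max(0.0, desired_gap - state.gap)
    # Clamp to a plausible comfort range.
    return max(-4.0, min(3.0, accel))


# Same traffic state, two different (made-up) driver embeddings.
state = State(speed=20.0, gap=35.0, speed_limit=25.0)
cautious = (0.0, 2.5)
assertive = (1.0, 1.0)
print(policy(state, cautious), policy(state, assertive))
```

In this toy setup the cautious profile brakes (about -2 m/s^2) because the 35 m gap is shorter than its preferred 50 m, while the assertive profile accelerates toward the limit. The policy code is shared; only the embedding differs.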

The key innovation is combining long-term preference learning with short-term instruction following. The model doesn't just pick from preset modes like "sport" or "eco"—it actually learns the nuanced differences in how individuals accelerate, merge, and overtake, then adapts those behaviors based on what you say.
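One simple way to picture a short-term instruction modifying a long-term profile is to treat the instruction as an offset in embedding space, clamped to a safe range. The sketch below assumes that design; it is not taken from the paper, and a keyword table stands in for the language understanding a real system would need. `INSTRUCTION_OFFSETS`, `adjust_embedding`, and the (assertiveness, headway) layout are hypothetical.

```python
# Hypothetical cue -> (assertiveness offset, headway offset in seconds).
# A keyword table stands in for real natural-language understanding.
INSTRUCTION_OFFSETS: dict[str, tuple[float, float]] = {
    "running late": (0.5, -0.5),   # brisker, shorter following distance
    "brake gently": (-0.5, 0.5),   # softer, longer following distance
    "carsick": (-0.3, 0.5),
}


def adjust_embedding(base_emb: tuple[float, float], instruction: str) -> tuple[float, float]:
    """Shift a long-term (assertiveness, headway_s) embedding by an instruction."""
    assertiveness, headway_s = base_emb
    text = instruction.lower()
    for cue, (d_assert, d_headway) in INSTRUCTION_OFFSETS.items():
        if cue in text:
            assertiveness += d_assert
            headway_s += d_headway
    # Clamp so no instruction can push behavior outside a safe envelope.
    assertiveness = min(max(assertiveness, 0.0), 1.0)
    headway_s = min(max(headway_s, 1.0), 3.0)
    return (assertiveness, headway_s)


driver = (0.4, 1.8)  # made-up long-term profile
print(adjust_embedding(driver, "I'm running late"))
print(adjust_embedding(driver, "Brake gently, I'm feeling carsick"))
```

Because the offsets are added to the driver's own embedding rather than replacing it, "I'm running late" makes a cautious driver somewhat brisker without turning them into an aggressive one, and the clamp keeps every instruction inside the allowed envelope.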

Why It Matters

- Personalization beyond presets: If you're building user-facing AI systems, this shows how to move past rigid configuration options. Instead of offering "Mode A vs. Mode B," you can learn continuous preference spaces and let natural language refine behavior in context.

- User embeddings for policy alignment: The technique of learning per-user embeddings that condition a base policy could transfer to other domains—imagine chatbots that remember your communication style, or coding assistants that match your architectural preferences.

- Bridging habits and instructions: The two-layer approach (stable long-term preferences + dynamic short-term commands) is a pattern worth stealing. Users want systems that "get them" by default but can be nudged with simple requests.
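The two-layer pattern carries over to other domains. Here is a minimal sketch, assuming a simple key/value profile: long-term preferences persist, while short-term nudges override them for a fixed number of steps and then expire. `PreferenceState`, `nudge`, and the step-based expiry are hypothetical illustrations, not DMW's design.

```python
from dataclasses import dataclass, field


@dataclass
class PreferenceState:
    """Hypothetical two-layer store: a stable profile plus expiring overrides."""
    long_term: dict[str, float]
    # key -> (override value, steps remaining)
    overrides: dict[str, tuple[float, int]] = field(default_factory=dict)

    def nudge(self, key: str, value: float, ttl_steps: int) -> None:
        """Apply a short-term override that stays active for ttl_steps steps."""
        self.overrides[key] = (value, ttl_steps)

    def effective(self) -> dict[str, float]:
        """Long-term profile with any active overrides layered on top."""
        merged = dict(self.long_term)
        for key, (value, _) in self.overrides.items():
            merged[key] = value
        return merged

    def step(self) -> None:
        """Advance one step: decrement remaining steps and drop expired overrides."""
        self.overrides = {
            k: (v, n - 1) for k, (v, n) in self.overrides.items() if n > 1
        }


prefs = PreferenceState({"max_accel": 1.5, "headway_s": 2.0})
prefs.nudge("max_accel", 2.5, ttl_steps=2)  # e.g. "I'm running late"
print(prefs.effective())
prefs.step()
prefs.step()
print(prefs.effective())
```

Expiring overrides keep a one-off request from silently rewriting the long-term profile, which only changes when it is deliberately updated.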

One Thing to Try

If you're building an AI agent that serves multiple users, experiment with learning lightweight user embeddings from interaction history rather than relying solely on explicit settings. Fine-tune your base model to condition outputs on these embeddings—you might find users feel the system "understands them" better without requiring extensive manual configuration.
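A minimal sketch of learning a lightweight user embedding, assuming a frozen linear base model and a per-user offset vector as the "embedding". Only the embedding is fitted, by stochastic gradient descent on squared error over the user's logged (features, action) pairs; the tip also suggests fine-tuning the base model, which this sketch leaves out. `predict`, `fit_user_embedding`, and the synthetic history are made up for the demo.

```python
import random


def predict(base_w: list[float], user_emb: list[float], x: list[float]) -> float:
    """Frozen base weights plus a per-user offset vector (the 'embedding')."""
    return sum(xi * (wi + ei) for xi, wi, ei in zip(x, base_w, user_emb))


def fit_user_embedding(
    base_w: list[float],
    history: list[tuple[list[float], float]],
    lr: float = 0.05,
    epochs: int = 200,
) -> list[float]:
    """Fit one user's embedding by SGD on squared error; base_w is never updated."""
    emb = [0.0] * len(base_w)
    for _ in range(epochs):
        for x, y in history:
            err = predict(base_w, emb, x) - y
            # Gradient of 0.5 * err**2 with respect to emb[i] is err * x[i].
            emb = [ei - lr * err * xi for ei, xi in zip(emb, x)]
    return emb


# Synthetic demo: log actions from a made-up "true" user offset, then recover it.
rng = random.Random(0)
base_w = [1.0, 1.0]
true_offset = [0.5, -0.2]
history = []
for _ in range(50):
    x = [rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)]
    history.append((x, predict(base_w, true_offset, x)))

print(fit_user_embedding(base_w, history))
```

Keeping the per-user part small means a new user's vector can be fitted from a modest interaction log without retraining the shared model.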