Training Medical AI to Think Like a Doctor: How Reinforcement Learning Beats Multiple Choice

Teaching Medical AI to Think Out Loud

TL;DR: Researchers built a medical AI that explains its reasoning before answering questions about X-rays and CT scans. It is trained with a composite reward that scores both correctness and clarity, so it does not need millions of labeled examples.

What It Is

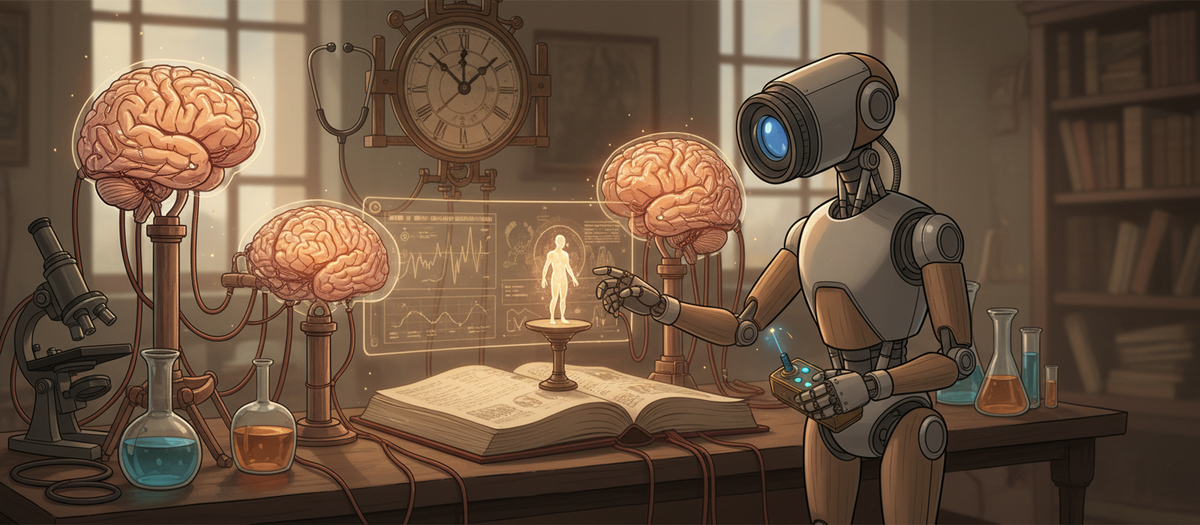

MediX-R1 is a multimodal AI system (meaning it handles both images and text) trained specifically for medical questions. Instead of just spitting out answers, it writes out its reasoning in `<think>` tags before responding, like showing your work on a math test. What sets it apart is the training: reinforcement learning (learning by trial and error with rewards) driven by four scoring signals working together. The first uses another AI as a judge to check whether the answer is medically correct, the second checks that the model uses proper medical terminology, the third rewards clear formatting, and the fourth penalizes the model for hallucinating what type of image it is looking at. With only 51,000 training examples, tiny by AI standards, the 8B-parameter model beats a 27B-parameter competitor that was trained on far more data.
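To make the formatting signal concrete, here is a minimal sketch of a format reward. Only the `<think>` tag comes from the description above. The exact layout (reasoning block first, then a plain-text answer), the function names, and the binary 0/1 scoring are assumptions for illustration, not the authors' implementation.

```python
import re

# Assumed layout: a <think>...</think> block followed by the final answer.
# Only the <think> tag is taken from the article; the rest is illustrative.
THINK_PATTERN = re.compile(r"^\s*<think>(.+?)</think>\s*(.+?)\s*$", re.DOTALL)


def split_response(text: str):
    """Return (reasoning, answer) if the text follows the assumed layout, else None."""
    match = THINK_PATTERN.match(text)
    if match is None:
        return None
    reasoning = match.group(1).strip()
    answer = match.group(2).strip()
    # Reject empty reasoning, empty answers, and a second <think> block in the answer.
    if not reasoning or not answer or "<think>" in answer:
        return None
    return reasoning, answer


def format_reward(text: str) -> float:
    """Hypothetical format reward: 1.0 when reasoning precedes a non-empty answer."""
    return 1.0 if split_response(text) is not None else 0.0
```

A response that skips the reasoning block scores 0.0 here no matter how good the answer is, which is how the reasoning step becomes something the model is trained to produce and not just asked for.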

Why It Matters

- You can train capable medical AI without massive datasets: Most medical AI systems need millions of examples and multi-stage training pipelines. MediX-R1's composite reward gets better results with about 51K examples and a single training stage, which makes specialized medical AI more accessible.

- Interpretable reasoning becomes enforceable, not optional: Because the reasoning step is baked into the reward function itself, the model explains itself consistently. In healthcare this is more than a nice-to-have: clinicians need to see the reasoning before they trust an answer, and regulators often expect it.

- The "LLM-as-judge" pattern works for domains without clear right answers: Unlike coding (where you can run tests) or math (where you can verify calculations), medical answers often have several valid phrasings. Using another LLM to judge semantic correctness, combined with medical embeddings that capture terminology, addresses the evaluation problem that has held back RL in healthcare.
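The LLM-as-judge idea from the last bullet can be sketched as a reward function. The prompt wording, the YES/NO protocol, and the `ask_judge` callable (a stand-in for whatever model client you use) are all assumptions made for this example. The article does not specify how the judge is prompted.

```python
from typing import Callable

# Hypothetical prompt; the real judge prompt is not given in the article.
JUDGE_TEMPLATE = (
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Candidate answer: {candidate}\n"
    "Does the candidate mean the same thing as the reference? Reply YES or NO."
)


def judge_reward(
    question: str,
    reference: str,
    candidate: str,
    ask_judge: Callable[[str], str],
) -> float:
    """Return 1.0 if the judge says the candidate matches the reference, else 0.0.

    ask_judge is an assumed interface: it takes a prompt string and returns
    the judge model's raw text reply.
    """
    prompt = JUDGE_TEMPLATE.format(
        question=question, reference=reference, candidate=candidate
    )
    verdict = ask_judge(prompt).strip().upper()
    # Anything other than an explicit YES counts as incorrect.
    return 1.0 if verdict.startswith("YES") else 0.0
```

Passing the judge in as a callable keeps the reward testable with a stub and lets you swap judge models without touching the training loop. With exact string matching, "myocardial infarction" and "heart attack" would score as a mismatch, whereas a judge can accept both.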

One Thing to Try

If you're building domain-specific AI where exact string matching fails but you still need reliable evaluation, steal their composite reward approach: combine an LLM judge for semantic correctness with embedding-based similarity (using domain-specific embeddings like PubMedBERT) and lightweight format rewards. Spreading the reward across several signals makes reward hacking (where a model exploits a single reward signal) harder, and it helps keep training stable even with small datasets.
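As a starting point, combining the signals could look like the sketch below. The weighted average, the signal names, and the weights are assumptions chosen for illustration. The article does not say how MediX-R1 weights its rewards, and in practice the embedding vectors would come from a domain model such as PubMedBERT, not from hand-written lists.

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two embedding vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def composite_reward(signals: Dict[str, float], weights: Dict[str, float]) -> float:
    """Weighted average of per-signal scores, each clipped to [0, 1].

    Both dictionaries use the same hypothetical signal names as keys.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(
        weights[name] * min(1.0, max(0.0, signals[name])) for name in weights
    )
    return weighted / total_weight


# Illustrative weights only; tune them for your own task.
EXAMPLE_WEIGHTS = {"judge": 0.5, "embedding": 0.3, "format": 0.2}
```

Because no single signal can push the total to its maximum, a model that learns to game one of them (for example, emitting perfectly formatted but wrong answers) still earns a low overall reward.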