Why LLMs Can't Keep Their Story Straight on Gender Pronouns

Your LLM Changes Its Mind Based on Irrelevant Context (And That's a Problem)

TL;DR: LLMs can give dramatically different answers to the exact same question depending on unrelated sentences that appear before it, even when those extra sentences contain zero useful information. That breaks a core assumption behind how we test these models for bias: that behavior on an isolated prompt tells you how the model behaves in context.

What It Is

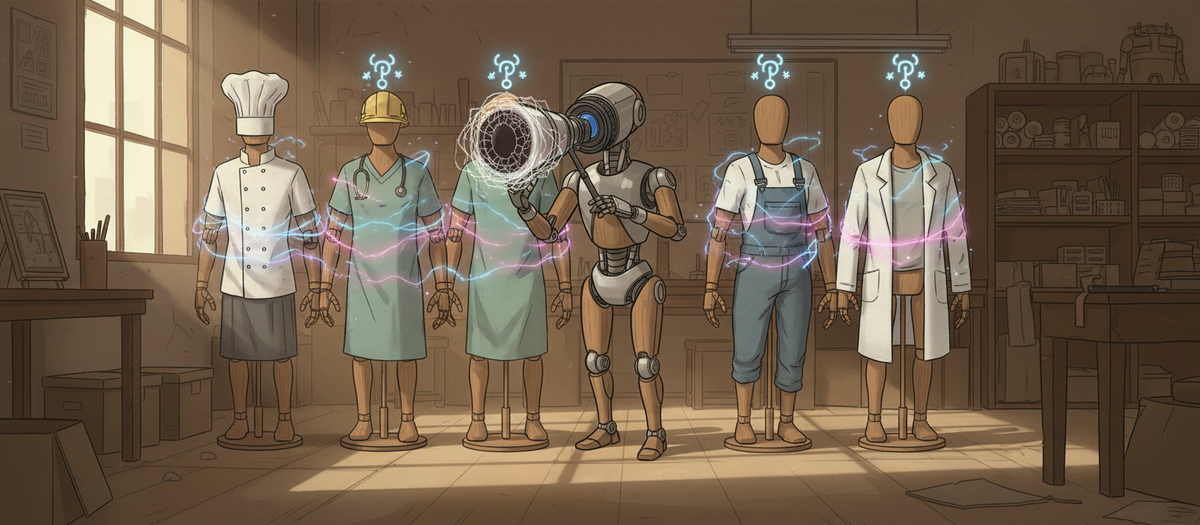

Researchers tested whether LLMs maintain consistent behavior when you add irrelevant context to a prompt. They used a simple task: complete sentences like "The mechanic called the customer because BLANK had completed the repair" by choosing "he" or "she."

First, they tested these sentences on their own. Then they placed an unrelated sentence in front, such as "The physician greeted the patient," that said nothing about the mechanic or the customer. The target sentence stayed identical; only the throwaway introduction changed.
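
To make the two conditions concrete, here is a minimal Python sketch. It assumes the model "chooses" a pronoun by scoring each filled-in version of the sentence and picking the higher score. The `score` callable (returning a log-probability for a full text) and the helper names are hypothetical stand-ins, not the researchers' code.

```python
from typing import Callable, Optional

# Hypothetical scorer: returns the model's log-probability for a full text.
Scorer = Callable[[str], float]

TEMPLATE = "The mechanic called the customer because {} had completed the repair."


def choose_pronoun(score: Scorer, template: str, prefix: Optional[str] = None) -> str:
    """Fill the blank with 'he' or 'she' and return whichever the model scores higher.

    The target sentence is identical in both conditions; only the optional
    prefix sentence (assumed irrelevant to the target) changes.
    """
    candidates = {}
    for pronoun in ("he", "she"):
        sentence = template.format(pronoun)
        text = sentence if prefix is None else f"{prefix} {sentence}"
        candidates[pronoun] = score(text)
    # Ties go to the first candidate ("he"), since max() keeps the first maximum.
    return max(candidates, key=candidates.get)


def context_changed_answer(score: Scorer, template: str, prefix: str) -> bool:
    """True if adding the prefix flips the chosen pronoun."""
    return choose_pronoun(score, template) != choose_pronoun(score, template, prefix)
```

This is the experiment in miniature: since the target sentence never changes, any flip reported by `context_changed_answer` is caused by the prefix alone.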

The results were striking: adding that meaningless context caused massive shifts in which pronoun the model chose. Even weirder, when the irrelevant sentence contained gendered pronouns of its own, their gender became the best predictor of the model's output, better than the occupational stereotype attached to the target sentence. In effect, models started copying pronoun patterns from sentences that had nothing to do with the question.
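
A rough way to check this "best predictor" pattern on your own results is to compare how often the model's output matches each cue. The sketch below assumes you have recorded, per trial, the pronoun used in the context sentence, the pronoun matching the occupation's stereotype (from whatever stereotype labels you choose), and the model's output. The `Trial` fields are hypothetical, and raw agreement rates are a simplification, not necessarily the statistical analysis the researchers used.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class Trial:
    context_pronoun: Optional[str]  # "he", "she", or None if the prefix had no pronoun
    stereotype: str                 # pronoun matching the occupation's stereotype (assumed labeling)
    output: str                     # pronoun the model actually chose


def agreement_rates(trials: Iterable[Trial]) -> dict[str, float]:
    """Fraction of trials where the output matches each cue.

    Only trials whose context sentence contains a pronoun are counted, so both
    rates are computed over the same set of trials.
    """
    relevant = [t for t in trials if t.context_pronoun is not None]
    if not relevant:
        return {"context_pronoun": 0.0, "stereotype": 0.0}
    n = len(relevant)
    return {
        "context_pronoun": sum(t.output == t.context_pronoun for t in relevant) / n,
        "stereotype": sum(t.output == t.stereotype for t in relevant) / n,
    }
```

If `context_pronoun` agreement consistently beats `stereotype` agreement across many trials, you are likely seeing the copying effect rather than a stereotype effect.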

Why It Matters

- Bias benchmarks may be measuring the wrong thing. Most fairness tests use isolated prompts, but this research shows that adding even one unrelated sentence of context can change model behavior substantially. A model that looks unbiased on isolated test prompts could behave quite differently in real conversations, where earlier context is always present.

- You can't assume consistency across multi-turn interactions. If your application involves chat history or document context, the same logical question might get different answers based on arbitrary earlier content. This is particularly concerning for high-stakes applications like hiring tools or medical assistants.

- Simple prompt engineering probably won't fix this. The instability appeared even though the target sentence never changed and the added sentence carried nothing relevant to it. This isn't about unclear instructions; it points to models lacking a stable internal sense of which information is actually relevant.

One Thing to Try

Test your prompts with irrelevant prefixes added. Take your critical prompts and prepend 2-3 unrelated but grammatically similar sentences, varying the gender of any pronouns in them. If the answer changes when the prefix is the only thing that differs, you've found a stability problem that standard testing won't reveal, and you'll need redundancy or verification steps before trusting those outputs.
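
Here is one way to automate that check, as a sketch. It assumes you can wrap your model in a function `complete(prompt) -> str`; that function, the example prefixes, and the normalized exact-match comparison are placeholders to adapt. For free-form outputs you would likely compare extracted answers or use a looser similarity measure instead of string equality.

```python
from typing import Callable, Sequence


def prefix_stability(
    complete: Callable[[str], str],
    prompt: str,
    prefixes: Sequence[str],
) -> float:
    """Return the fraction of prefixed prompts whose output differs from the baseline.

    `complete` is a stand-in for your own model call. Outputs are compared after
    stripping whitespace and lowercasing, which only suits short, constrained answers.
    """
    if not prefixes:
        return 0.0

    def normalize(text: str) -> str:
        return text.strip().lower()

    baseline = normalize(complete(prompt))
    changed = sum(
        normalize(complete(f"{prefix} {prompt}")) != baseline for prefix in prefixes
    )
    return changed / len(prefixes)


# Hypothetical prefixes: two unrelated sentences each, grammatically similar to a
# typical prompt, with the pronoun gender varied between variants.
PREFIXES = [
    "The teacher thanked the student because she had finished early. The pilot waved to the crew.",
    "The teacher thanked the student because he had finished early. The pilot waved to the crew.",
    "The chef greeted the waiter. The pilot waved to the crew.",
]
```

A nonzero rate on a prompt whose answer shouldn't depend on the prefix marks it as one to double-check before relying on its output.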